Newsletter | Should Dunkin’ Be Generally Recognized as Safe?

HHS Secretary Robert F. Kennedy, Jr. went viral recently when he suggested that he was going to ask companies like Dunkin’ (née Dunkin’ Donuts) and Starbucks for safety data proving that it’s “okay for a teenage girl to drink an iced coffee with 115 grams of sugar in it.” When a Boston Globe reporter picked up the comment and ran a story on it, New Englanders rebelled. After all, Dunkin’ is, for better or for worse, a New England institution. Just ask famous Bostonian Ben Affleck.

But while RFK Jr. tends to be wrong virtually every time he speaks about any public health issue, he might not be wrong about this one. He was referring to a policy lever that he can use at the Food and Drug Administration – the rule on adding ingredients that are “generally recognized as safe.” The GRAS rule allows food and beverage manufacturers to add ingredients to their products without pre-market review by the FDA if they are – you guessed it – “generally recognized as safe.” The rule was originally created to avoid lengthy review processes when adding common ingredients like salt and vitamin C, but critics have argued that over time it has enabled the proliferation of ultraprocessed foods (UPFs). RFK Jr. is reviewing GRAS in response to a petition by former FDA commissioner David Kessler to revoke GRAS exemptions for a list of ingredients commonly found in UPFs.

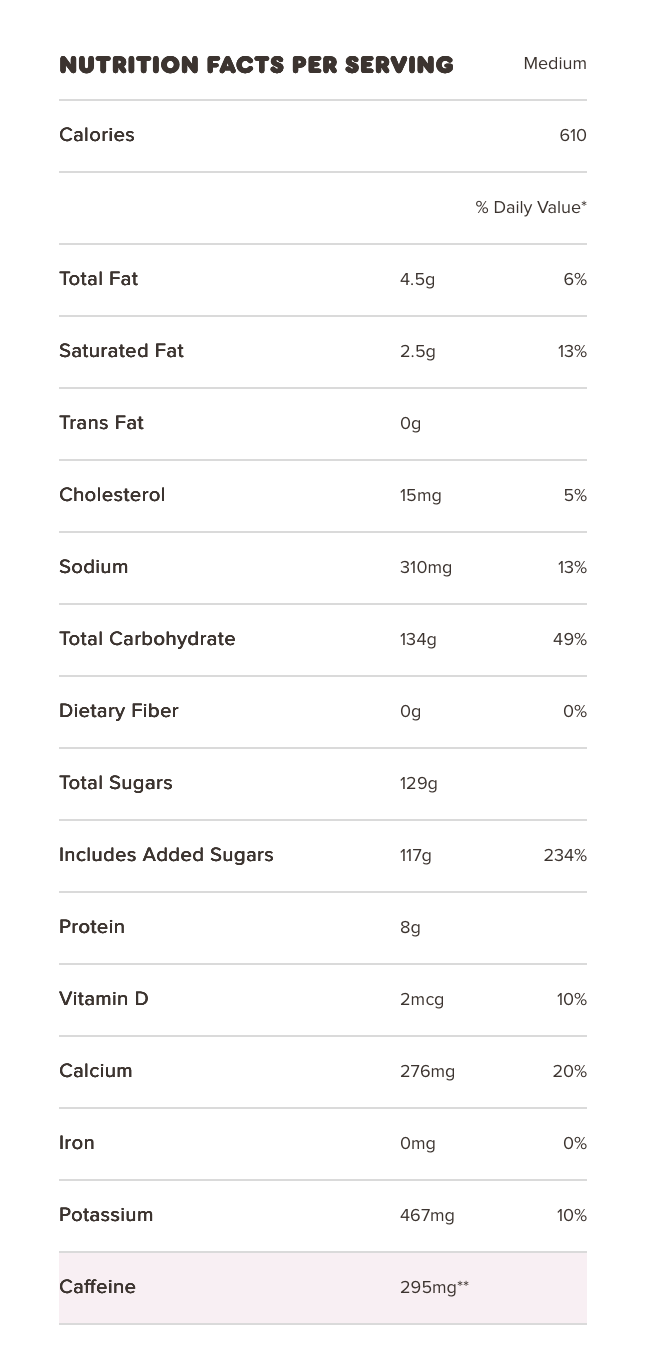

The example cited – the sugary iced coffee – raises some interesting questions. If you look at Dunkin’s menu, you’ll see that the default frozen coffee, which comes with whole milk and “butter pecan swirl” flavoring, contains 117 grams of added sugars, which is about 234% of the recommended daily limit in the most recent Dietary Guidelines for Americans. (Let’s leave aside the 600 calories for this discussion.) It would be hard to argue that a little bit of sugar here and there is “unsafe” – after all, sugar is something of a staple in any diet. But 117 grams in one drink obviously isn’t good. The question is whether it should be considered “unsafe.”
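For readers who want to check the 234% figure, here is a minimal sketch of the arithmetic. It assumes a daily reference of 50 grams of added sugars (10% of a 2,000-calorie diet, at 4 calories per gram of sugar). That reference value and the function name are our assumptions for illustration; they are inferred from the percentage, not stated above.

```python
# Assumption: 50 g/day reference for added sugars
# (10% of a 2,000-calorie diet, at 4 calories per gram of sugar).
ADDED_SUGAR_REFERENCE_G = 50.0


def percent_of_daily_reference(added_sugar_g, reference_g=ADDED_SUGAR_REFERENCE_G):
    """Return added sugar as a percentage of the assumed daily reference."""
    if reference_g <= 0:
        raise ValueError("reference_g must be positive")
    return 100.0 * added_sugar_g / reference_g


# The frozen coffee's 117 grams of added sugars, from the menu figure cited above.
frozen_coffee_pct = percent_of_daily_reference(117)
print(f"{frozen_coffee_pct:.0f}% of the assumed daily reference")
```

A different reference value (for example, a stricter limit for a smaller daily calorie budget) would push the percentage higher still.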

For the GRAS rule, FDA defines “safe” as “a reasonable certainty in the minds of competent scientists that the substance is not harmful under the intended conditions of use.” “Harmful” is, of course, a tricky word here, but there is evidence that consuming certain volumes of sugar-sweetened beverages (SSBs) each day is associated with increased risk of developing type 2 diabetes (T2D). By our own calculations, drinking one medium Dunkin’ frozen coffee each day – and no other SSBs – would correspond to a 37% increased risk of T2D (as compared to drinking no SSBs). Sounds pretty harmful. (Others apparently find different kinds of harm – see “Your salted caramel mocha latte is destroying society.”)

But the phrase “under intended conditions of use” does a lot of work in that definition, and it highlights the challenge of regulating products that cause harm when consumed at certain levels but not at others. The harm does not come from one drink of frozen coffee – it comes from the aggregate consumption of products like frozen coffee (or other products high in added sugars) over time. So the harm that comes from the product depends on the context of how one uses it: an occasional frozen coffee in an otherwise healthy diet that is low in sugars probably won’t cause any harm at all. But if the frozen coffees are a daily treat and part of a poor diet that is otherwise high in sugars, you can certainly argue that the product contributes a significant added risk of harm.

You also have the issue that the frozen coffee is but one product sold by Dunkin’, which is but one of many companies that might play a role in someone’s diet. Consider a thought experiment in which one restaurant chain is solely responsible for a given person’s diet and that diet is extremely unhealthy and inevitably leads to diet-related chronic illnesses. We would probably consider that chain largely responsible for the person’s resulting illness. Now imagine a different scenario where there are thousands of restaurants with identical menus that collectively provide 100% of the person’s food intake, but no one of them provides more than 1% of that intake. We would probably be loath to assign any responsibility to any one restaurant because they all played such small parts in the person’s diet. (We might also choose to blame the person for their dietary choices, but we dealt with that in our last issue!) It’s also hard to hold companies accountable in today’s environment because so many of them are largely interchangeable. If one were to, say, eliminate all McDonald’s restaurants, one would expect that Burger King and Wendy’s would gain more customers – not that all the McDonald’s meals would be replaced by fresh, healthy, home-cooked meals.

“Intended” use opens up another can of worms: what to do when actual use – at least among many customers – is quite different. What might Dunkin’ say about the intended use of its frozen coffee drinks? To make the case that the drink isn’t harmful, the company might have to claim that it’s intended to be consumed infrequently, say once a week. That leaves open what happens when many customers drink it far more often than that.

Driving is an interesting case study. Automobile manufacturers would argue that automobiles are not intended to be used when the driver is inebriated. And yet 32% of all auto-related fatalities involve a drunk driver. Speeding comes in a close second at 29%, and we’re sure the car companies would likewise say that their cars are intended to be driven within the posted speed limits.

If the provider of the service (or manufacturer of the product) knows that it’s often not being used as intended – and that such use is harmful to the users and/or others – what is their responsibility? Should they monitor use data to identify any misalignment between “intended” use and actual use? Should they offer nudges, warnings, or other interactions to discourage what might be considered “problematic use”? And what if those don’t work? (Our own research for the Building H Index, for example, showed that giving users optional tools to limit their time on social media has minimal impact.) Should they modify the product to prevent any harmful use? After all, cars could employ breathalyzer ignition interlocks to prevent drunk driving or speed governors to eliminate speeding. Instagram could literally lock you out after you exceed whatever daily limit is considered safe. Maybe they should be responsible for preventing the harms from unintended use? Maybe not. Or perhaps, at least, they might be required to refrain from design strategies like infinite scroll that, ironically, are clearly intended to facilitate or induce “unintended” use.

Of course, the harmfulness of a product can also be highly dependent on any given individual’s reaction to using it. Not everyone who drinks develops an alcohol dependency, but some certainly do. And sometimes, the particular mix of product and consumer is literally lethal. We’re now seeing cases of people who went down some deep rabbit holes with their AI chatbots, with disastrous results. (See “Man Fell in Love with Google Gemini and It Told Him to Stage a 'Mass Casualty Attack' Before He Took His Own Life: Lawsuit” for a particularly bizarre and tragic example.) Executives at AI companies have pledged to do better, particularly as it relates to the chatbot experience for minors, but their responses largely pale in comparison with Johnson & Johnson’s response to the Tylenol crisis of 1982. When seven people died from taking pills that had been laced with cyanide, the pharma giant recalled 31 million bottles, took the product entirely off the market, and only restarted sales once they’d figured out how to distribute the pills in tamper-proof bottles. Alas, that was a simpler problem, a simpler solution, and perhaps a simpler time.

In addition to the questions of defining harm, defining intended use, and establishing responsibility, the Dunkin’ episode also highlights the challenge of making policy when the companies whose products might actually be harmful are beloved. Sure, Dunkin’s contribution to the food environment doesn’t exactly bathe the company in glory, but a loyal New Englander might argue that they’re our Dunkin’, they’re on our team, and they’re all right. (It’s like all the surveys that show most Americans disapprove of Congress but approve of their own Congressperson.)

Product regulation is often about determining when a product crosses some line of harm and then banning it – or having it reformulated to fall shy of that line. This approach makes sense in some product contexts: AI chatbots should not persuade people to harm themselves – full stop. An alternative approach – which might be especially appropriate when it comes to food, beverages, and diet-related illnesses – is to hold companies accountable for their contribution to the environment that shapes behavior. Dunkin’ might not be responsible for even one percent of Americans’ food and drink intake, but to the extent that Dunkin’ contributes a relatively unhealthy share of what is, on average, a largely unhealthy national diet, maybe they’re responsible for a small share of diet-related illnesses – and the enormous health care costs that result from treating them. It’s a radical idea, but short of creating such proportional accountability, we’re left with the status quo, where a company can profit from participating in the creation and ongoing provision of an unhealthy food environment and yet evade responsibility because they are just one of many doing the same.
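To make the idea of proportional accountability a bit more concrete, here is a rough sketch of how such a share might be computed. Everything in it is hypothetical: the function name, the notion of "contribution units" and the example numbers are placeholders for illustration, not estimates for Dunkin’ or any other company, and a real scheme would have to settle how contribution is measured in the first place.

```python
def proportional_share(company_contribution, total_contribution, total_cost):
    """Allocate a total cost in proportion to one company's contribution.

    All inputs are hypothetical: 'contribution' stands in for whatever
    measure of unhealthy intake a policy might adopt (for example, grams
    of added sugars sold), and 'total_cost' for the diet-related costs
    being allocated.
    """
    if total_contribution <= 0:
        raise ValueError("total_contribution must be positive")
    if not 0 <= company_contribution <= total_contribution:
        raise ValueError("company_contribution must be between 0 and the total")
    return total_cost * company_contribution / total_contribution


# Made-up numbers: a company supplying 0.5 of 100 contribution units
# would carry 0.5% of a hypothetical 1,000,000 cost.
share = proportional_share(0.5, 100, 1_000_000)
print(share)
```

The point of the sketch is only that a small share is not the same as a zero share: under this kind of rule, being one of many contributors shrinks a company’s responsibility without erasing it.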

The weird marriage of RFK Jr.’s ideologically and scientifically incoherent vision with the MAGA movement into MAHA creates a certain amount of cognitive dissonance among many politicians today (if that’s even still a thing). As Helena Bottemiller Evich pointed out in her summary of the Dunkin’ episode, “Now we’re seeing the left yell about the nanny state using Tea Party and Second Amendment symbols?” Huh? It’s all a bit scrambled. But maybe this disorientation can become an opportunity by enabling people to break free of entrenched positions and open up to new solutions that, while the confusion reigns, aren’t yet labeled as belonging to one side or another.
